Introduction

Artificial intelligence is quietly reshaping how people create digital work, from design to publishing. From studios across Europe to startup founders on other continents, AI software helps people produce sharp images quickly and without high costs. Artists, sellers, freelancers, and students can now work faster, spend less on production, and reach new ideas sooner. These tools remove old limits, so eye-catching images take seconds instead of long waits.

Midjourney is one of the tools driving this shift, turning written descriptions into images. Instead of sketching by hand, you describe a scene in words and the model generates visuals with surprising clarity and detail. Each output blends imagination with machine precision: not drawn with pencil or paint, but produced from patterns learned across a massive collection of images. Work that once took artists hours now takes moments, guided only by a description.

Midjourney V2, released in April 2022, quietly changed how artificial intelligence creates images. It moved results from rough experiments toward visuals people could actually use.

If you want to understand AI image creation, Midjourney V2 is hard to skip. It laid the groundwork for prompt writing: newer models build on what this version introduced, and the way images form from words first took recognizable shape here. Anyone serious about control and clarity in prompts will return to these basics again and again.

What is Midjourney V2?

Midjourney V2 is the second version of Midjourney's text-to-image model. It turns written descriptions into images with better shape and detail than V1, producing cleaner output through improved processing behind the scenes.

In simple terms:

You describe an idea in words, and the model translates your phrases into shapes and colors. Version 2 was a meaningful step up: it interpreted prompts better, produced more consistent images, and behaved far more dependably than before.

Why V2 Matters

This version changed how machine-made images were used. They stopped being odd curiosities and started helping people get real work done, moving from novelty experiments into storyboards and drafts. The change came without fanfare. It wasn't magic; V2 was simply better at matching what a person imagined, narrowing the gap between an idea and the image.

Key Advantages:

- Usable results: outputs were finally good enough for real work, whereas earlier attempts rarely got past testing

- Enhanced image consistency

- Improved understanding of prompts

- More structured and predictable results

Key Insight:

With Midjourney V2, people began to trust AI-generated images, because outputs became predictable instead of chaotic. That shift opened the door: AI was no longer just doodling but producing work people could count on.

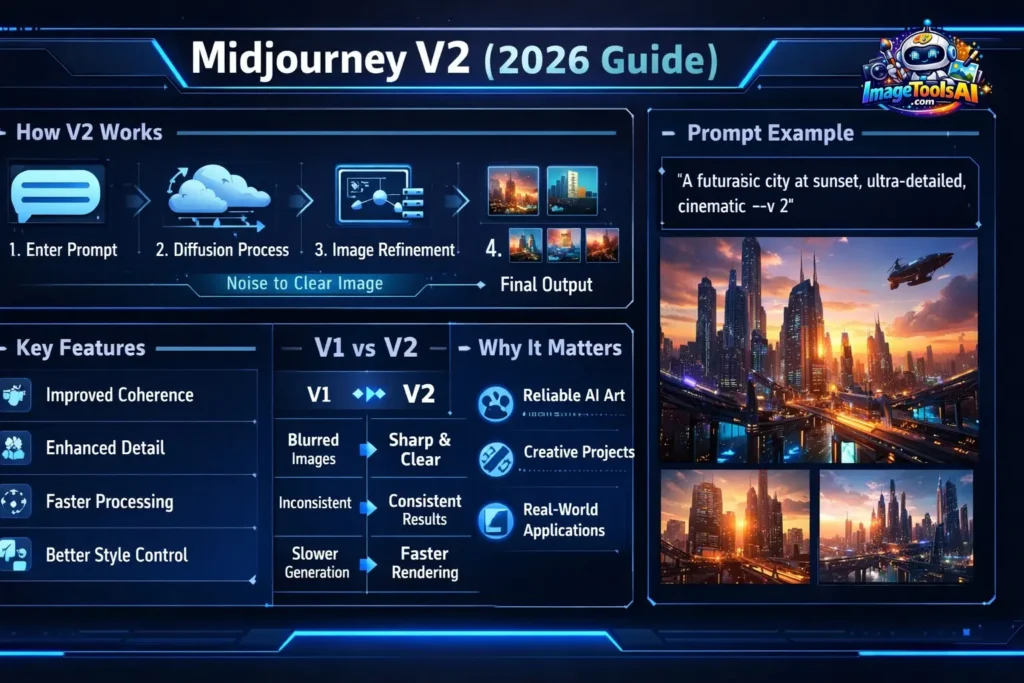

How Midjourney V2 Works

Midjourney has not published the full technical details of V2, but its process can be broken into a few broad stages. Users see only the finished image; behind the scenes, a model shaped by patterns in a vast collection of training images does the work. The prompt guides everything that follows, and although results seem instant, generation time also depends on server load.

1. Diffusion Models

The core technology behind Midjourney V2 is based on diffusion processes.

- The AI begins with random visual noise

- It gradually refines that noise into clear forms

- Each iteration enhances clarity and structure
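Midjourney has not published V2's architecture, so the exact formulation it uses is unknown. As a reference point, the widely used DDPM formulation of diffusion is shown below, as the general technique rather than Midjourney's own method. Noise is added over T steps according to a schedule of small values β_t, and generation runs that process backwards. The symbol ε_θ stands for a trained network that predicts the noise in x_t given the step t and the text conditioning c (the prompt).

```latex
\bar{\alpha}_t = \prod_{s=1}^{t} (1 - \beta_s), \qquad
q(x_t \mid x_0) = \mathcal{N}\!\left(x_t;\ \sqrt{\bar{\alpha}_t}\, x_0,\ (1 - \bar{\alpha}_t)\, I\right)

x_{t-1} = \frac{1}{\sqrt{1 - \beta_t}} \left( x_t - \frac{\beta_t}{\sqrt{1 - \bar{\alpha}_t}}\, \epsilon_\theta(x_t, t, c) \right) + \sigma_t z, \qquad z \sim \mathcal{N}(0, I)
```

The first line describes how noisy an image is after t steps; the second is a single denoising step, repeated from t = T down to t = 1 starting from pure noise x_T.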

2. Natural Language Processing

Natural language processing extracts meaning from the text you type. It:

- Reads and analyzes the prompt

- Identifies context, objects, and styles

- Converts language into visual instructions

3. Denoising Process

During generation:

- Noise is removed a little at a time

- Patterns and details are introduced

- The image becomes sharper with each step
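To make the denoising loop concrete, here is a minimal pure-Python sketch of DDPM-style sampling on a tiny 1-D "image" (a list of five numbers). It is a toy built on stated assumptions, not Midjourney's code: a real system uses a trained neural network, conditioned on the prompt, to predict the noise at each step. Here the hypothetical `oracle_noise` function cheats by knowing the target, which lets you watch random noise turn into the target one step at a time. The schedule values (`steps=50`, `beta_start`, `beta_end`) are illustrative choices.

```python
import math
import random


def make_schedule(steps, beta_start=1e-4, beta_end=0.2):
    """Linear noise schedule (steps >= 2): per-step betas and cumulative alpha-bars."""
    betas = [beta_start + (beta_end - beta_start) * i / (steps - 1) for i in range(steps)]
    alpha_bars, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return betas, alpha_bars


def oracle_noise(x_t, target, alpha_bar):
    """Hypothetical stand-in for a trained network: solves
    x_t = sqrt(alpha_bar) * target + sqrt(1 - alpha_bar) * eps for eps."""
    return [(x - math.sqrt(alpha_bar) * x0) / math.sqrt(1.0 - alpha_bar)
            for x, x0 in zip(x_t, target)]


def denoise(target, steps=50, seed=0):
    """Start from pure Gaussian noise and remove a little of it at every step."""
    rng = random.Random(seed)
    betas, alpha_bars = make_schedule(steps)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # the starting point: pure noise
    for t in reversed(range(steps)):
        beta, alpha_bar = betas[t], alpha_bars[t]
        eps = oracle_noise(x, target, alpha_bar)
        # DDPM mean update: subtract the predicted noise, then rescale.
        mean = [(xi - beta / math.sqrt(1.0 - alpha_bar) * ei) / math.sqrt(1.0 - beta)
                for xi, ei in zip(x, eps)]
        # Add a little fresh noise on every step except the last one.
        x = [m + math.sqrt(beta) * rng.gauss(0.0, 1.0) for m in mean] if t > 0 else mean
    return x


if __name__ == "__main__":
    target = [0.0, 0.5, 1.0, 0.5, 0.0]
    print([round(v, 3) for v in denoise(target)])
```

Because the oracle predicts the noise perfectly, the final step lands on the target; with a real, imperfect network the result is a new image that merely fits the prompt.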

Simple Workflow

- User inputs a prompt

- The AI interprets the description

- Random noise is generated

- Refinement begins step-by-step

- The final image appears once refinement finishes

Key Features of Midjourney V2

1. Improved Image Coherence

Outputs became less chaotic and more logically structured:

- Better composition

- More natural object placement

- Reduced visual randomness

2. Enhanced Detail Quality

Images became noticeably more precise:

- Sharper textures

- Cleaner outlines

- Better lighting effects

3. Better Style Interpretation

Midjourney V2 could better replicate artistic styles:

- Recognizes creative genres

- Produces consistent aesthetics

- Maintains stylistic integrity

4. Faster Processing

Performance improvements included:

- Reduced generation time

- More efficient rendering

- Faster iteration cycles

5. Smarter Prompt Handling

Prompts were interpreted more faithfully, so results lined up more closely with what you asked for:

- Improved contextual understanding

- Better alignment with user intent

- More accurate visual output

Benefits & Use Cases

Midjourney V2's arrival sparked a fresh wave of experimentation, especially in European design studios and ad agencies. Some teams hesitated, while others began testing AI-driven visual workflows almost overnight. From Berlin to Lisbon, artists found faster ways past creative blocks. Not every company adapted quickly, but a quiet transformation took root in how images were made and shared, built from small changes to everyday routines.

For Designers

- Event posters for cities like Paris or Milan

- Conceptual artwork for agencies

- Branding and identity visuals

For Marketers

- Social media creatives

- Advertising campaigns

- Product visualizations

For Freelancers

- Fiverr design services

- Logo creation

- Client-based visual projects

For Students

- Academic presentations

- Creative assignments

- Visual storytelling

Even in 2026, studying Version 2 builds stronger prompt-writing skills and a core understanding of how AI image generation works.

How to Use Midjourney V2

Join Discord

Midjourney operates through Discord servers.

Enter Command

Use the command:

/imagine your prompt here --v 2
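Midjourney writes its parameters with two ASCII hyphens (`--v 2`), but copying a prompt from a web page or word processor can silently turn those hyphens into a single dash character. The sketch below is a hypothetical helper, `imagine_command`, that converts an en or em dash in front of a version flag back into `--` and makes sure the prompt ends with exactly one version flag. It only builds text for you to paste into Discord; it is not part of any Midjourney API.

```python
import re

# An en dash (U+2013) or em dash (U+2014) that starts a version flag.
_DASH_FLAG = re.compile(r"[\u2013\u2014](?=(?:version|v)\b)")
# An existing "--v N" or "--version N" flag, including any spaces before it.
_VERSION_FLAG = re.compile(r"\s*--(?:version|v)\s+\d+")


def imagine_command(prompt: str, version: int = 2) -> str:
    """Hypothetical helper: build '/imagine <prompt> --v <version>' text."""
    text = _DASH_FLAG.sub("--", prompt)         # typographic dash -> two hyphens
    text = _VERSION_FLAG.sub("", text).strip()  # drop any version flag already present
    return f"/imagine {text} --v {version}"


print(imagine_command("a lighthouse at dusk \u2013v 2"))  # prints /imagine a lighthouse at dusk --v 2
```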

Generate Images

- Wait a few seconds

- Midjourney returns four versions, each taking a different path from the same prompt

Choose Options

- U (Upscale): Improves resolution

- V (Variations): Creates different versions

Download Output

Pick the image that best fits your needs; often one stands out from the rest, and that is probably the right choice. Download it and keep it for later use.

Tip: Start clear and stay narrow. Tight, precise wording sharpens results: skip extra words, keep the prompt focused, and you will get what matters faster with less drift.

Midjourney V2 vs V1

Pros

- Significant improvement over V1

- User-friendly interface

- Faster rendering speed

- Better prompt interpretation

Cons

- Less realistic than later models

- Weak text generation

- Limited customization

- Occasional issues with anatomy

Best Alternatives to Midjourney V2

1. Stable Diffusion

- Open-source

- Highly flexible

2. DALL·E

- Strong language understanding

- Beginner-friendly

3. Leonardo AI

- Ideal for gaming assets

- Easy to learn

4. Adobe Firefly

- Professional-grade tool

- Integrates with the Adobe tools designers already use

Comparison Table

| Tool | Best For | Ease of Use | Pricing |
| --- | --- | --- | --- |
| Midjourney V2 | Artistic visuals | Medium | Paid |
| Stable Diffusion | Custom workflows | Advanced | Free (open-source) |
| DALL·E | Simplicity | Easy | Paid |
| Adobe Firefly | Designers | Easy | Paid |

Tips to Get the Best Results

- Write clear prompts

- Focus on one concept

- Add lighting keywords

- Experiment with variations

- Keep descriptions concise yet detailed

How to Use AI Tools Effectively

- Start with simple inputs

- Gradually add complexity

- Test multiple outputs

- Learn from iterations
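One way to follow these steps systematically is to generate a small grid of prompt variations and compare the results side by side. The hypothetical `prompt_variants` helper below starts from a simple base prompt and adds one comma-separated option per dimension (lighting, style, and so on); with no options it returns just the base prompt, so you can add complexity one dimension at a time.

```python
from itertools import product


def prompt_variants(base: str, **options: list[str]) -> list[str]:
    """Hypothetical helper: one prompt per combination of option values.

    Keyword arguments keep their order, so each variant lists the options
    in the order you passed them. With no options, returns [base].
    """
    return [", ".join([base, *combo]) for combo in product(*options.values())]


for p in prompt_variants(
    "a lighthouse at dusk",
    lighting=["soft lighting", "dramatic lighting"],
    style=["watercolor", "cinematic"],
):
    print(p, "--v 2")
```

Two lighting options times two styles gives four prompts; keeping the grid small makes it easier to see which change actually improved the output.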

Advanced Prompt Engineering Tips

Use Structure

Subject → Style → Mood → Lighting → Details
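That order can be applied mechanically. The sketch below uses a hypothetical `PromptParts` dataclass that joins the parts in the Subject → Style → Mood → Lighting → Details order, skips any left empty, and appends `--v 2` by default. The comma-separated layout is an assumption: Midjourney reads free text, so the order is a habit for clarity rather than a required syntax.

```python
from dataclasses import dataclass, field


@dataclass
class PromptParts:
    """Hypothetical container for the Subject -> Style -> Mood -> Lighting -> Details structure."""
    subject: str
    style: str = ""
    mood: str = ""
    lighting: str = ""
    details: list[str] = field(default_factory=list)

    def render(self, version: int = 2) -> str:
        # Join the parts in the recommended order, skipping any left empty.
        parts = [self.subject, self.style, self.mood, self.lighting, *self.details]
        text = ", ".join(p.strip() for p in parts if p.strip())
        return f"{text} --v {version}"


prompt = PromptParts(
    subject="an old fishing harbor",
    style="oil painting",
    mood="calm",
    lighting="soft lighting",
    details=["ultra-detailed"],
)
print(prompt.render())  # an old fishing harbor, oil painting, calm, soft lighting, ultra-detailed --v 2
```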

Add Power Words

- cinematic

- ultra-detailed

- realistic

- 4K

- soft lighting

Avoid Mistakes

- Overloading prompts

- Using vague language

- Missing key details
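These three mistakes can be caught roughly before you spend a generation on a prompt. The sketch below is a hypothetical `lint_prompt` checker: the word limits and the list of vague words are assumptions to tune against your own results, and counting words is only a crude proxy for overloading or missing detail.

```python
import re

# Hypothetical thresholds and word list: adjust them based on your own results.
MAX_WORDS = 60
MIN_WORDS = 4
VAGUE_WORDS = {"nice", "good", "beautiful", "cool", "amazing", "stuff", "thing", "things"}


def lint_prompt(prompt: str) -> list[str]:
    """Return warnings for overloading, vague language, and missing details."""
    words = re.findall(r"[a-z0-9'-]+", prompt.lower())
    warnings = []
    if len(words) > MAX_WORDS:
        warnings.append(f"overloaded: {len(words)} words (limit {MAX_WORDS})")
    vague = sorted(VAGUE_WORDS.intersection(words))
    if vague:
        warnings.append("vague words: " + ", ".join(vague))
    if len(words) < MIN_WORDS:
        warnings.append(f"missing details: only {len(words)} words; add style, mood, or lighting")
    return warnings


print(lint_prompt("a nice thing"))
print(lint_prompt("an old fishing harbor at dawn, oil painting, soft lighting"))  # []
```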

Europe Relevance

Midjourney and similar AI tools are widely used across Europe:

- 🇩🇪 Germany → Digital agencies

- 🇫🇷 France → Creative studios

- 🇪🇸 Spain → E-commerce brands

- 🇬🇧 UK → Freelancers

Learning Midjourney V2 builds prompt skills that carry over to AI tools used in these markets and beyond.

FAQs

Can you still use Midjourney V2?

Yes. Adding --v 2 to your prompt selects it.

Is Midjourney free?

No. It runs on paid plans rather than free access.

How does V2 compare with newer models?

Older models look less lifelike than recent ones, and features have evolved considerably since V2.

Why learn V2 today?

It builds a solid base for shaping prompts, and skills grow steadily once the core ideas are clear.

What are V2's main limitations?

- Lower realism

- Weak text rendering

- Limited control

Conclusion

Midjourney V2 is no longer the latest model, yet it still stands as a turning point in how AI image generation evolved. Newer versions exist, but its impact has lasted longer than many expected.

From V2 onward, machine-made pictures worked well enough to trust. They began fitting into real jobs without constant fixes, and businesses found ways to use them because they failed far less often.

If you want to master AI tools:

- Learn the fundamentals

- Understand prompt behavior

- Study Version 2 deeply

Because:

Midjourney V2 is where the real journey begins.

Now is a good moment to start learning about AI. Staying ahead tomorrow begins with small steps taken today.