Introduction:

Artificial intelligence has rapidly changed how people create visual content online. Until recently, design software was expensive, ready-made graphics added up fast, and most people needed serious training just to get started. Today the situation has flipped: type a few words and get an image back in seconds, with the model doing the heavy lifting behind the scenes.

Not long ago, only specialists could shape such visuals; now, anyone can. Entire fields feel the shift – marketing leans on it, bloggers use it, brands rebuild with it. Game creators sketch faster, and online shops show products differently. What once took hours happens fast, reshaping how ideas become real.

One of the first big names in this shift was DALL·E 2, built by OpenAI. It gave many people their first real look at making images from written prompts. Its impact went beyond the technology itself: it shaped how we interact with creative tools.

By 2026, the world of artificial intelligence has shifted considerably. Image generators such as DALL·E 3, MidJourney V6, and Stable Diffusion XL have taken over most professional tasks, thanks to sharper detail, better interpretation of instructions, and more room for originality.

This raises an important analytical question:

Is DALL·E 2 still relevant in 2026?

This comprehensive guide answers that question in depth. You will learn:

- Core features and capabilities of DALL·E 2

- Advanced prompt engineering techniques (NLP-based)

- Real-world applications in digital industries

- Technical limitations in modern AI environments

- Comparison with newer AI image generators

- Pricing and accessibility structure

- Step-by-step usage workflow

- SEO and marketing optimization strategies

- Best alternative tools in 2026

This article is written in simple, structured English, making it suitable for beginners, marketers, bloggers, and AI enthusiasts.

What is DALL·E 2?

DALL·E 2 is an AI image generator from OpenAI that turns written descriptions into pictures. You type what you have in mind, and the model builds a matching image using only that description. Instead of drawing by hand, you give directions in plain language, and the result looks like art made from sentences.

DALL·E 2: Three Key Transformations From an NLP Perspective

- Text Encoding (Semantic Understanding)

The model interprets human language and converts it into machine-readable embeddings.

- Concept Mapping (Visual Association Layer)

It connects linguistic concepts (objects, actions, attributes) with learned visual patterns.

- Image Synthesis (Diffusion-Based Rendering)

The system gradually constructs an image through iterative refinement.

Example:

Prompt:

“A futuristic cyberpunk city glowing at night with flying vehicles”

Output:

A synthetic digital artwork representing a neon-lit metropolitan environment.

Core Working Mechanism of DALL·E 2

DALL·E 2 operates using a combination of:

- Diffusion Models

- Neural Networks

- Latent Space Representation

- Contrastive Language-Image Pretraining (CLIP)

Natural Language Processing

The system's text encoder reads the input and captures its semantic components, such as:

- Objects (city, car, person)

- Attributes (futuristic, red, glowing)

- Environment (night, forest, studio lighting)

Latent Space Mapping

The prompt is converted into a vector in a multidimensional latent space, where prompts with related meanings end up close together.
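To make the idea of comparing meaning mathematically more concrete, here is a minimal sketch. It is a toy, not DALL·E 2's actual encoder: the `embed` function is a hypothetical stand-in that simply counts words, whereas real models use learned dense vectors. It only illustrates how closeness between two prompts can be measured with cosine similarity.

```python
import math
from collections import Counter

def embed(prompt: str) -> Counter:
    """Toy embedding (assumption): a sparse word-count vector."""
    return Counter(prompt.lower().split())

def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse vectors (1.0 = same direction)."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

city_night = embed("futuristic city at night")
city_noon = embed("futuristic city at noon")
forest = embed("quiet forest in fog")

print(cosine_similarity(city_night, city_noon))  # 0.75 (three of four words shared)
print(cosine_similarity(city_night, forest))     # 0.0 (no words shared)
```

A learned encoder would also place prompts with different words but similar meaning close together, which this word-count toy cannot do.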

Diffusion Process

Random noise is gradually transformed into structured visual content.
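The gradual move from noise to structure can be sketched in a few lines. This is a simplified illustration under a strong assumption: in a real diffusion model a trained neural network predicts what noise to remove at each step, while here a known `target` list stands in for that learned prediction. The names `denoise_trajectory` and `mean_abs_error` are made up for this example.

```python
import random

def denoise_trajectory(target, steps=50, seed=0):
    """Start from random noise and step toward `target`, recording each state."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # pure random noise
    trajectory = [x]
    for step in range(steps):
        remaining = steps - step
        # Remove an equal share of the remaining error at each step.
        x = [xi + (ti - xi) / remaining for xi, ti in zip(x, target)]
        trajectory.append(x)
    return trajectory

def mean_abs_error(x, target):
    """Average absolute distance between the current state and the target."""
    return sum(abs(xi - ti) for xi, ti in zip(x, target)) / len(target)

target = [0.0, 0.5, 1.0, 0.5, 0.0]  # hypothetical 5-"pixel" image
trajectory = denoise_trajectory(target)
errors = [mean_abs_error(state, target) for state in trajectory]

print(f"error at start: {errors[0]:.3f}")
print(f"error at end:   {errors[-1]:.3f}")
```

The error shrinks at every step, which mirrors how the image becomes more structured as sampling proceeds.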

Iterative Refinement

The model enhances:

- Sharpness

- Lighting

- Composition

- Object consistency

Final Image Output

A fully generated image is delivered based on prompt interpretation.

Key Features of DALL·E 2

Even in 2026, DALL·E 2 retains several foundational capabilities that remain useful for basic and educational purposes.

Text-to-Image Generation

Transforms natural language into images using semantic interpretation.

Example:

“A luxury sports car parked under neon lights in Tokyo”

Style Adaptability

Supports multiple artistic styles:

- Photorealism

- Digital illustration

- Oil painting aesthetics

- Cartoon rendering

- Abstract visual generation

Image Variation Engine

Generates multiple interpretations of a single prompt or source image, enabling:

- Concept exploration

- A/B testing for ads

- Creative brainstorming

Inpainting

Allows selective modification of image regions:

- Object removal

- Background replacement

- Color adjustments

- Detail correction
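The role of the mask in inpainting can be shown with a small sketch. It covers only the final compositing idea (keep the original outside the mask, use new content inside it) and assumes the replacement pixels already exist. A real model generates that content to fit its surroundings, which this toy does not attempt. The grid values and the `inpaint` function are hypothetical.

```python
def inpaint(original, generated, mask):
    """Keep original pixels where mask is False; take generated ones where True."""
    return [
        [gen if m else orig for orig, gen, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]

# Tiny 2x3 "image" described with labels instead of colors.
original = [["sky", "sky", "sky"], ["grass", "dog", "grass"]]
generated = [["sky", "sky", "sky"], ["grass", "grass", "grass"]]  # assumed model output
mask = [[False, False, False], [False, True, False]]  # True marks the region to edit

result = inpaint(original, generated, mask)
print(result)  # [['sky', 'sky', 'sky'], ['grass', 'grass', 'grass']]
```

Only the masked cell changes, which is why inpainting suits object removal and local corrections.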

Rapid Concept Visualization

Ideal for early-stage ideation:

- Blog thumbnails

- Social media creatives

- Storyboarding concepts

DALL·E 2 Prompt Engineering Guide

Prompt engineering is the process of designing structured natural language inputs to optimize AI output quality.

Basic NLP Prompt Structure

A high-quality prompt includes:

- Subject entity

- Visual style descriptor

- Lighting conditions

- Environment context

- Emotional tone
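One way to keep these five components consistent across prompts is to assemble them from named fields. The sketch below is only an illustration: the `PromptSpec` class and its field order are assumptions for this example, not a format that DALL·E 2 requires.

```python
from dataclasses import dataclass

@dataclass
class PromptSpec:
    """Hypothetical container for the five prompt components listed above."""
    subject: str
    style: str = ""
    lighting: str = ""
    environment: str = ""
    tone: str = ""

    def render(self) -> str:
        """Join the non-empty components into one comma-separated prompt."""
        parts = [self.subject, self.environment, self.lighting, self.style, self.tone]
        return ", ".join(p.strip() for p in parts if p.strip())

spec = PromptSpec(
    subject="Luxury skincare bottle",
    environment="on a marble surface",
    lighting="soft studio lighting",
    style="high-end product photography style",
    tone="premium mood",
)
print(spec.render())
# Luxury skincare bottle, on a marble surface, soft studio lighting, high-end product photography style, premium mood
```

Leaving a field empty simply drops it from the prompt, so the same structure works for quick drafts and detailed briefs.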

Cinematic Prompt Example

“A cinematic aerial shot of Paris at sunrise, golden volumetric lighting, ultra-realistic rendering, 8K detail, shallow depth of field”

Commercial Marketing Prompt

“Luxury skincare bottle on marble surface, soft studio lighting, premium branding aesthetic, high-end product photography style”

Artistic Prompt Example

“Impressionist painting of a quiet riverside village at sunset, soft brush strokes, warm color palette, artistic expressionism”

NLP Prompt Optimization Techniques

To improve output quality:

- Use descriptive adjectives (luxury, cinematic, surreal)

- Add contextual modifiers (at sunset, in fog, neon-lit)

- Define camera perspective (close-up, aerial view, macro shot)

- Specify artistic domain (photography, painting, 3D render)
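The four techniques above can double as a quick checklist before submitting a prompt. The sketch below is a rough helper under clear assumptions: the hint lists contain only the example terms from this section, and matching is plain substring search, so it will miss synonyms. `MODIFIER_HINTS` and `missing_modifiers` are names invented for this example.

```python
from typing import List

# Example terms taken from the list above; extend these for real use.
MODIFIER_HINTS = {
    "adjective": {"luxury", "cinematic", "surreal"},
    "context": {"at sunset", "in fog", "neon-lit"},
    "camera": {"close-up", "aerial view", "macro shot"},
    "domain": {"photography", "painting", "3d render"},
}

def missing_modifiers(prompt: str) -> List[str]:
    """Return the modifier categories for which no hint appears in the prompt."""
    text = prompt.lower()
    return [
        name
        for name, hints in MODIFIER_HINTS.items()
        if not any(hint in text for hint in hints)
    ]

print(missing_modifiers("a cat"))
# ['adjective', 'context', 'camera', 'domain']
print(missing_modifiers("Cinematic aerial view of Paris at sunset, photography"))
# []
```

An empty result does not guarantee a good image; it only confirms that each category is represented.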

Use Cases of DALL·E 2 in 2026

Even with newer tools available, DALL·E 2 remains relevant for certain workflows.

Blogging & Content Creation

- Featured blog images

- Concept illustrations

- Article visualization assets

Social Media Marketing

- Instagram posts

- Facebook advertisements

- Pinterest visual boards

E-Commerce Industry

- Product mockups

- Branding prototypes

- Ad creative testing

Educational Content

- Visual learning aids

- Slide presentations

- Concept diagrams

Creative Development

- Game concept design

- Character ideation

- Storyboarding

Limitations of DALL·E 2 in 2026

Limited Semantic Accuracy

It struggles with:

- Multi-object relationships

- Complex scene structuring

- Precise spatial reasoning

Outdated Architecture

Newer systems outperform it in:

- Context awareness

- Prompt interpretation

- Visual realism

Restricted Creative Control

It lacks:

- Prompt weighting

- Style blending controls

- Fine-grained composition editing

Inconsistent Outputs

Identical prompts may generate:

- Different compositions

- Variable object placement

- Inconsistent styling
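One reason for this variability is that diffusion-based generation starts from random noise, so each run begins from a different point even when the prompt is identical. The sketch below illustrates that idea only. It assumes a hypothetical `initial_noise` helper and does not reflect how any particular tool handles seeds, which varies between products.

```python
import random

def initial_noise(size, seed=None):
    """Return the starting noise for one generation run (toy example)."""
    rng = random.Random(seed)
    return [round(rng.gauss(0.0, 1.0), 3) for _ in range(size)]

run_a = initial_noise(4, seed=1)
run_b = initial_noise(4, seed=2)
run_a_again = initial_noise(4, seed=1)

print(run_a == run_b)        # False: different seeds give different starting noise
print(run_a == run_a_again)  # True: the same seed reproduces the same start
```

When a tool does not let you fix the seed, generating several candidates and picking the best one is the practical workaround.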

Not Ideal for Professional Production

Modern industries prefer advanced tools for:

- Advertising campaigns

- Brand identity systems

- Commercial-grade visuals

DALL·E 2 vs Modern AI Tools

| Feature | DALL·E 2 | DALL·E 3 | MidJourney V6 | Stable Diffusion XL |
|---|---|---|---|---|
| Prompt Understanding | Medium | Very High | High | High |
| Visual Realism | Medium | High | Very High | High |
| Creative Control | Low | Medium | High | Very High |
| Professional Use | Limited | High | Very High | High |
| 2026 Relevance | Low | Very High | Very High | High |

Key Insight:

DALL·E 2 is now primarily used for educational purposes and basic experimentation.

Pricing Overview

DALL·E 2 historically followed a credit-based pricing model:

- Free trial credits (limited usage)

- Pay-per-image system

- API-based pricing for developers

Pricing structures may vary depending on platform integration and API access.
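For budgeting, a pay-per-image model reduces to simple arithmetic. The sketch below is an estimate only: the price and the free allowance are placeholder numbers, not actual rates, and it assumes one credit covers one image, which may not match a given platform.

```python
def estimate_cost(images: int, price_per_image: float, free_credits: int = 0) -> float:
    """Estimate spend after subtracting free credits (all values are assumptions)."""
    if images < 0 or free_credits < 0 or price_per_image < 0:
        raise ValueError("inputs must be non-negative")
    billable = max(0, images - free_credits)
    return round(billable * price_per_image, 2)

# Hypothetical scenario: 120 images, 15 free credits, 0.02 per image.
print(estimate_cost(120, 0.02, free_credits=15))  # 2.1
```

Check the current rates on the platform you use before relying on any figure.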

Step-by-Step Guide: How to Use DALL·E 2

Write a Structured Prompt

Define subject, style, lighting, and environment.

Input Prompt

Enter text into the AI interface.

Generate Image

The system processes the prompt using its diffusion model.

Refine Output

Adjust language, add details, or modify descriptors.

Download Final Image

Export image for use in projects.

Best Alternatives to DALL·E 2

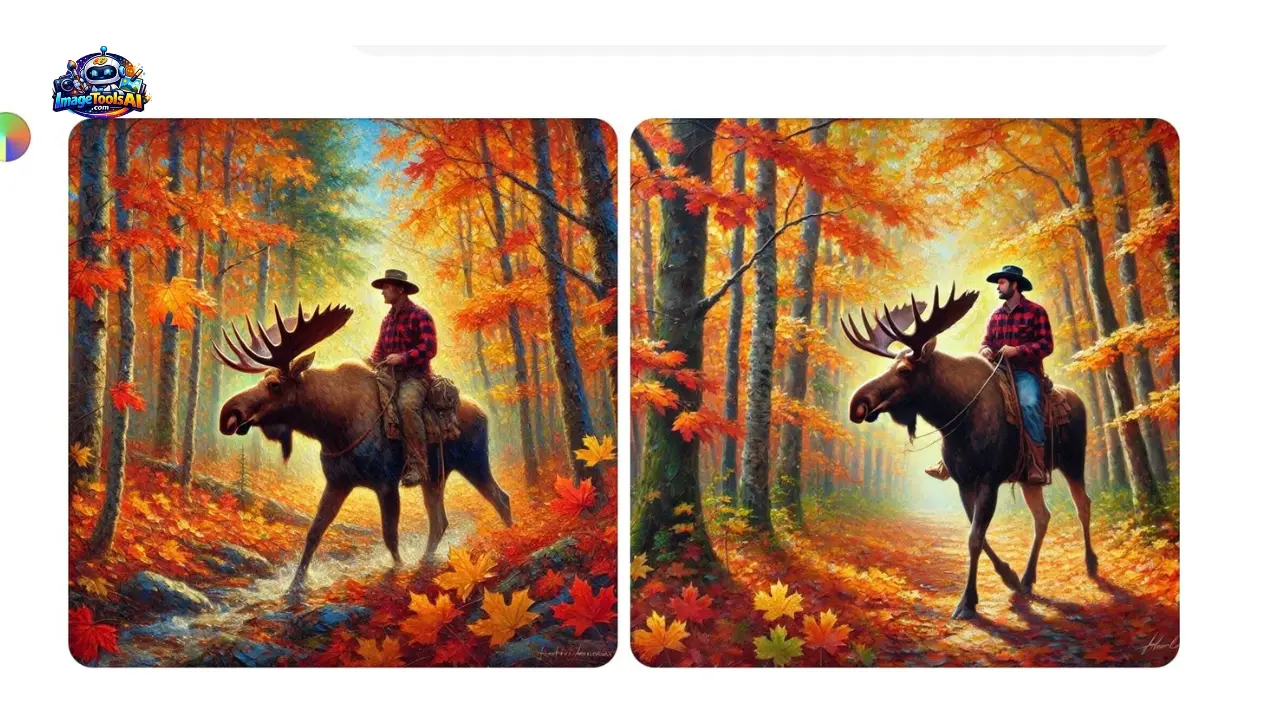

MidJourney V6

Best for:

- Cinematic visuals

- High realism

- Artistic creativity

DALL·E 3

Best for:

- Accurate prompt interpretation

- Clean commercial visuals

Stable Diffusion XL

Best for:

- Full customization

- Open-source flexibility

Adobe Firefly

Best for:

- Professional design workflows

- Marketing and branding

Canva AI

Best for:

- Social media creators

- Fast design production

SEO Strategy Section

To rank this topic effectively, implement NLP SEO strategies:

Primary Keywords

- DALL·E 2 guide 2026

- AI image generator

- text to image AI

- DALL·E prompts

LSI Keywords

- AI art generator

- generative AI tools

- MidJourney vs DALL·E

- AI design tools 2026

Content Optimization Techniques

- Use long-tail keywords

- Add comparison tables

- Include prompt examples

- Use FAQ schema

- Maintain semantic keyword density
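For the FAQ schema item, the markup is usually a JSON-LD object of type `FAQPage` from schema.org. The sketch below builds one from question and answer pairs. The `faq_jsonld` helper and the sample question wording are illustrative assumptions, so adapt them to the questions on your own page.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Sample pair; the question text is a placeholder for this example.
pairs = [
    (
        "Is DALL·E 2 still useful in 2026?",
        "Yes, but mainly for learning, experimentation, and basic image generation.",
    )
]
markup = json.dumps(faq_jsonld(pairs), indent=2, ensure_ascii=False)
print(markup)
```

The resulting JSON is typically placed in the page inside a `<script type="application/ld+json">` tag.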

FAQ

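Q: Is DALL·E 2 still worth using in 2026?

A: Yes, but mainly for learning, experimentation, and basic image generation.

Q: Is DALL·E 2 free to use?

A: It offers limited access, but advanced usage typically requires payment.

Q: Which tools are better than DALL·E 2?

A: DALL·E 3 and MidJourney V6 are significantly more advanced.

Q: Can DALL·E 2 be used for commercial work?

A: Yes, but modern tools provide better commercial-grade outputs.

Q: What are the main limitations of DALL·E 2?

A: Limited prompt accuracy and outdated image generation quality.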

Conclusion

DALL·E 2 was a real step forward in how machines connect words with images. Because of it, people everywhere began turning sentences into visuals, and it helped lay the groundwork for many modern image systems.

In 2026, however, it lags behind newer options in both efficiency and output quality.

If you are just starting out, it can still be a handy first step toward creative work with artificial intelligence. For professional results, newer tools are the better choice:

- MidJourney V6

- DALL·E 3

- Stable Diffusion XL

Final Insight

The future of content creation belongs to those who understand and leverage AI tools effectively. Mastering prompt engineering and generative design is now a critical skill for digital success.